How do you make sure a radio signal is within acceptable limits for Helium? Short version: Assert your antenna gain (including your cable loss) and location accurately and you don’t need to do anything else.

Wait, you want more? Dawg, why didn’t you say so? Let’s dive in!

First, let’s start with why we need an “acceptable” strength. Three words: Proof Of Coverage. We need to be able to prove that we’re actually providing coverage where we claim we are. This is important for two reasons. First, when businesses hear about the world of IoT and Helium, if we can show them a map of where our coverage has actually been proven to exist and at what strength, they can quickly make a decision regarding whether or not they want to use the Helium Network, and if they need to add a new Hotspot to provide coverage.

Second, we need to prove coverage in an accurate way in order to combat gaming, aka cheating. Understanding how this works requires a little bit of radio theory, but relax, I’ll walk ya through it.

As you read this and the example I give at the end, you’ll come to the understanding that as of now, March 2022, the RSSI limits are gobsmackingly lax. This will change. For now, just bookmark that idea as “work in progress.” Onward!

Let’s start with that signal strength, or RSSI. RSSI stands for Received Signal Strength Indicator, and, as its name indicates, is a measurement of the received signal strength. In order to know what the received signal strength *should* be, we need to know a few things. Those are broken into 3 sections.

1. Beaconer Information

- Beaconer’s transmit power. In the US, most of our hotspots transmit at 27 dBm. Your region may be different.

- Beaconer’s cable loss. (In the Helium app, this is included in the antenna gain section.) This is a function of cable efficiency and length. 100′ of LMR400 at 915 MHz will lose about 3.9 dB, going by the manufacturer’s published attenuation figure for 900 MHz.

- Beaconer’s reported antenna gain.

Knowing the Beaconer’s transmit power comes from knowing what region of the world the Hotspot is in, and what the legal limit is for power output. For example, US915 can blast at 27 dBm while EU868 is limited to 16 dBm.

2. Distance & Region

Over any given distance a radio signal will lose a theoretical amount of strength. The actual amount can change, sometimes drastically, depending on environmental characteristics like vegetation or building obstacles, and to a lesser extent on humidity, rain, snow, sleet, and pollution. The theoretical amount is called FSPL, or Free Space Path Loss. This is the loss in signal strength over a clear, unobstructed path through “normal” air.
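If you’d rather see the number than take my word for it, here’s a minimal Python sketch of the standard textbook FSPL formula. It is not Helium’s code; the function name `fspl_db` and the 40 km / 915 MHz inputs are just picked for illustration.

```python
import math

SPEED_OF_LIGHT_M_PER_S = 299_792_458.0


def fspl_db(distance_km: float, frequency_mhz: float) -> float:
    """Free Space Path Loss in dB over a clear, unobstructed path.

    Hypothetical helper for illustration; not taken from Helium's code.
    """
    if distance_km <= 0 or frequency_mhz <= 0:
        raise ValueError("distance and frequency must be positive")
    distance_m = distance_km * 1_000.0
    frequency_hz = frequency_mhz * 1_000_000.0
    # Textbook formula: FSPL = 20 * log10(4 * pi * d * f / c)
    ratio = 4.0 * math.pi * distance_m * frequency_hz / SPEED_OF_LIGHT_M_PER_S
    return 20.0 * math.log10(ratio)


# Example inputs (assumed for illustration): 40 km at 915 MHz.
print(f"FSPL: {fspl_db(40.0, 915.0):.1f} dB")
```

For 40 km at 915 MHz this comes out to roughly 123.7 dB. Remember it only covers clear air; trees and buildings pile extra loss on top.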

3. Receiving (Witness) Information

- Witness’ reported antenna gain

- Witness’ reported cable loss (in the Helium app, cable loss is included in the antenna gain section)

- Witness’ reported RSSI (at what strength did they “hear” the signal?)

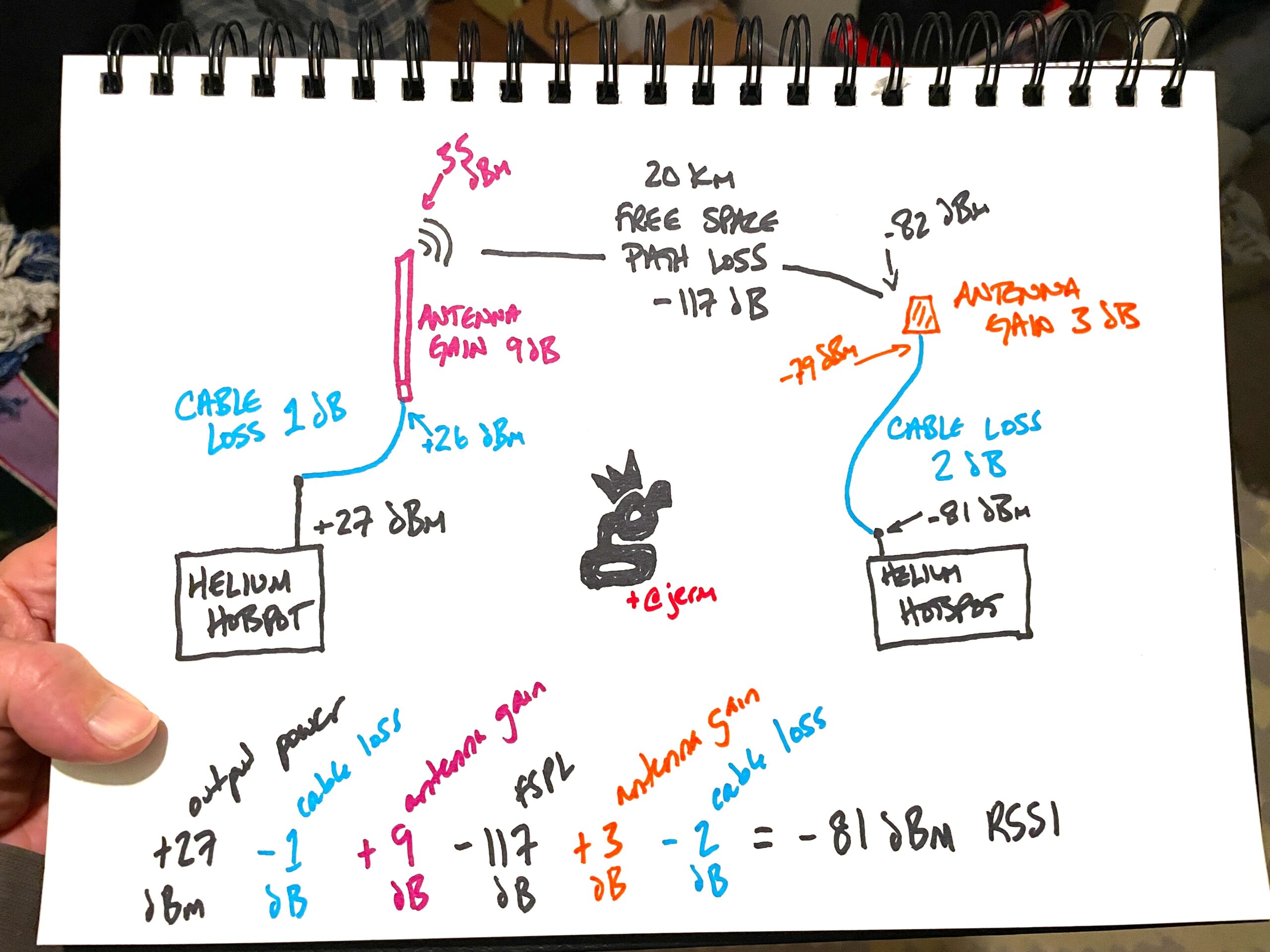

How does that look? Lemme draw ya a picture, and yes, I’ll make up a few numbers.

The signal starts at the Beaconing Hotspot’s output and loses a little power along the cable. The antenna shapes and focuses the energy out into space, where energy is lost along the path. The receiving antenna picks it up according to its gain, a bit more is lost going down the cable, and what remains is finally received by the Witnessing Hotspot.

The ‑81 dBm RSSI that the receiving Hotspot (aka the Witness) reports is then compared against what it should have received, given how far it’s asserted to be from the Beaconing Hotspot and the output power for that region.

Now, there’s a problem, because there’s the theory, then there’s the real world, and then there’s our interpretation of what should “count” in the real world. Remember that term “gobsmackingly lax” I used above? Here’s where you start to understand it.

You see, as much as we’d like to think that we humans can accurately assess and calculate the world around us, we’re not always as accurate as we’d like to be. The actual path loss over 20 km may be much higher if there’s vegetation in the way (lookin’ at you, Florida) or buildings (Hi New York!). Since the radio models Helium uses don’t yet take vegetation or buildings or other obstacles into account, the actual results can be much weaker than predictions, sometimes by large margins.

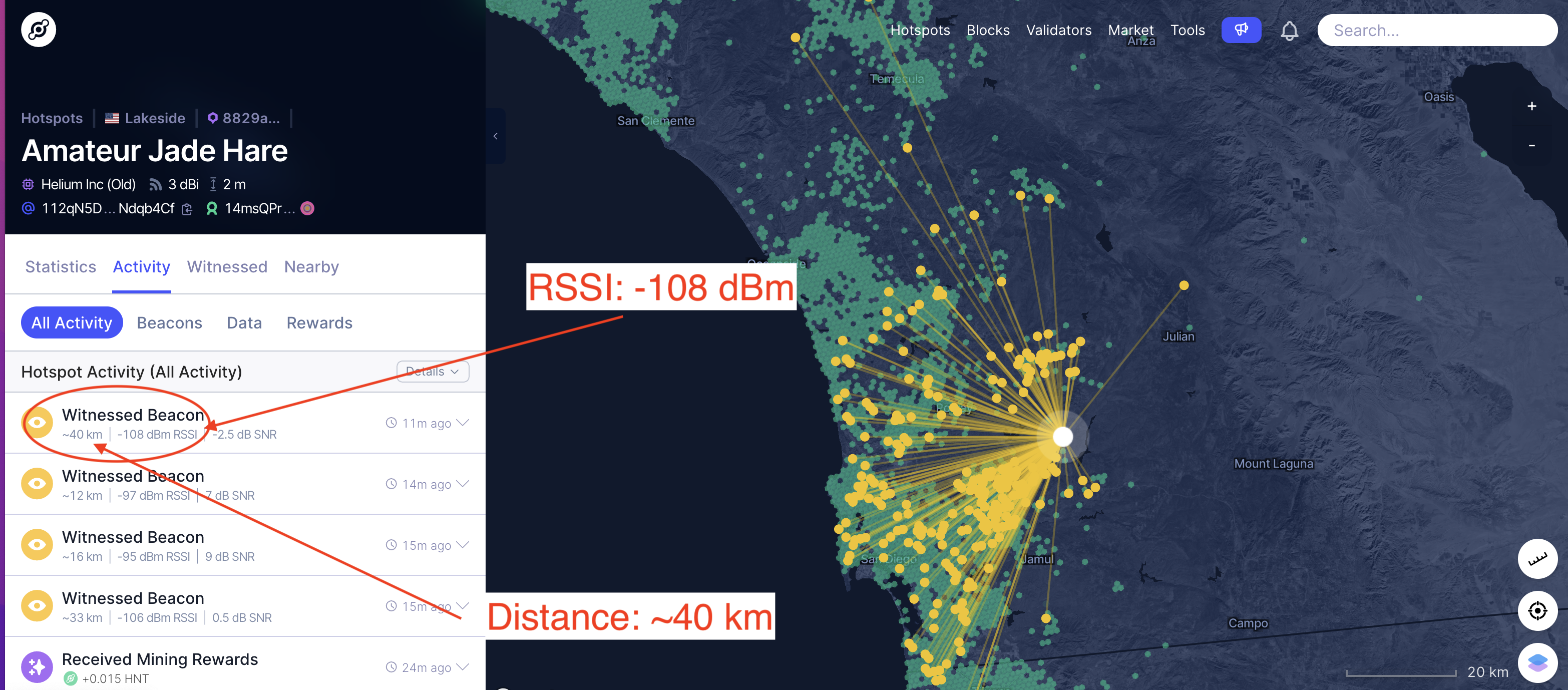

How does it work? Let’s take an example. Here’s Amateur Jade Hare with a 3 dBi HNTenna Witnessing a Beacon from Amusing Eggshell Mongoose, which has an asserted antenna gain of 5.8 dBi and is 40 km away.

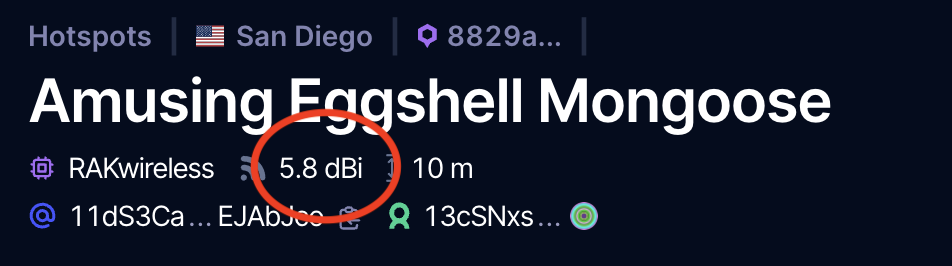

Ok, so that’s cool, but where did I get the info about Amusing Eggshell Mongoose? Right off Explorer.

That could be a 5.8 dBi RAK, or it could be a 6 dBi from McGill with 0.2 dB of cable loss. By the way, you can add or subtract all this “dBi, dB, and dBm” stuff interchangeably without worrying about the differences for now. Radio geeks will bristle at that statement. I guarantee you I’ll get at least one snide comment about how you can’t possibly do that and live. Whatevs.

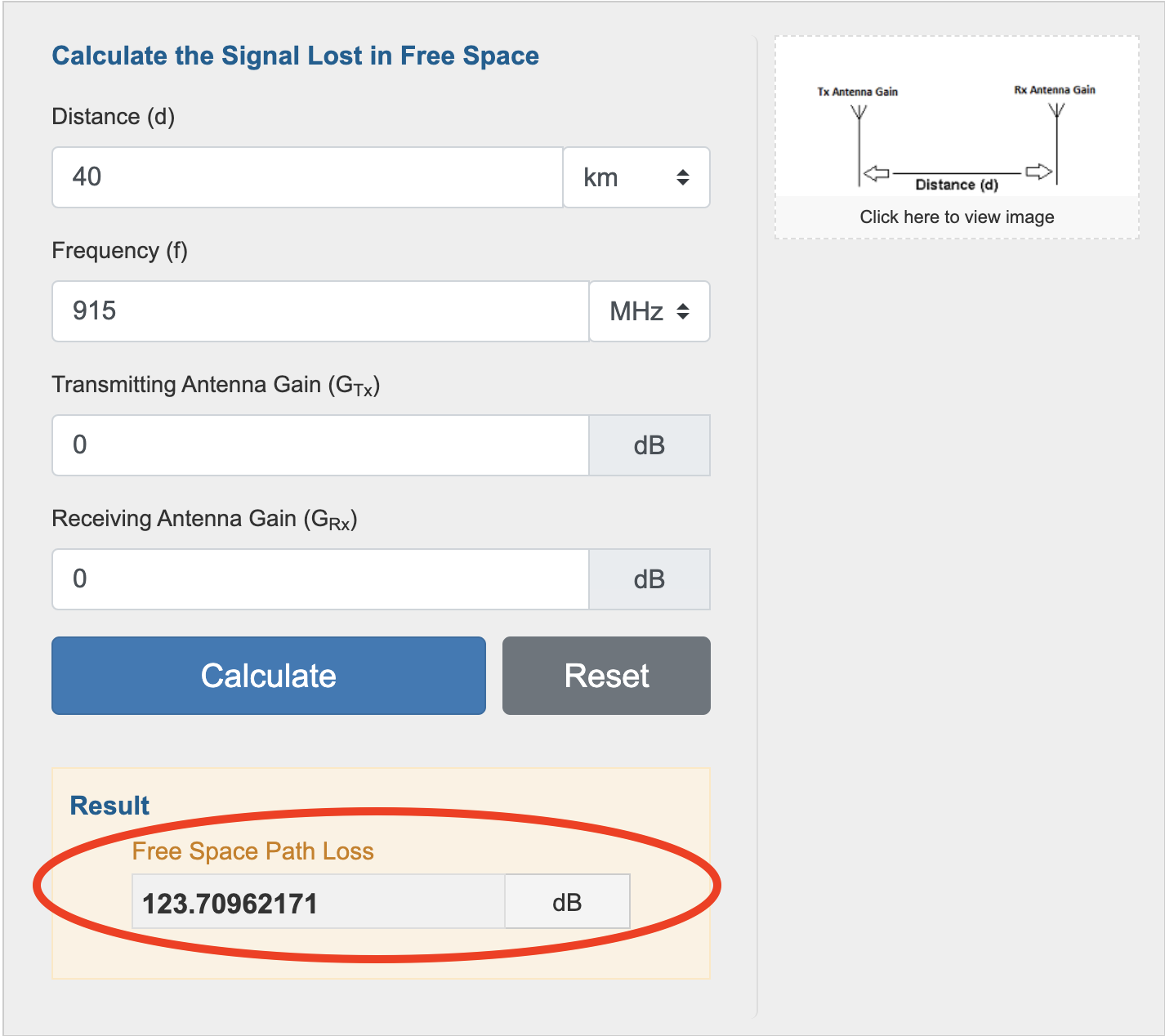

What about the FSPL? I headed over to EverythingRF’s calculator for that, here’s what I got:

I’ll call that 124 to make the math easy. So now we’ve got everything we need.

- Beaconing Hotspot Output (US915) = 27 dBm

- Beaconing Hotspot Cable Loss = 0.2 dB

- Beaconing Hotspot Antenna Gain (before cable loss) = 6 dBi

- FSPL = ~124 dB

- Receiving Antenna Gain (including cable loss, in this case almost nothing because it’s 4′ of LMR400) = 3 dBi

So what SHOULD the reported RSSI be?

27 dBm - 0.2 dB + 6 dBi - 124 dB + 3 dBi = -88.2 dBm RSSI

What was the reported RSSI? ‑108 dBm!

That’s almost a 20 dB difference, and it still cleared the line! What does that tell you? It tells ME that it is bloody difficult to correctly assess location based solely on RSSI and current radio modeling. I know for a fact that AJH is where it says it is; it’s my Hotspot. I don’t know about Amusing Eggshell Mongoose, but if it’s a gaming hotspot it’s doing a terrible job of earning. My guess is that AEM is where it says it is.
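Here’s that same arithmetic as a minimal Python sketch, in case you want to plug in your own numbers. The function name `expected_rssi_dbm` is made up for illustration, and this is just the simple add-and-subtract link budget from this post, not Helium’s actual validity check.

```python
def expected_rssi_dbm(
    tx_power_dbm: float,
    tx_cable_loss_db: float,
    tx_antenna_gain_dbi: float,
    path_loss_db: float,
    rx_gain_dbi: float,
) -> float:
    """Simple link budget: add the gains, subtract the losses.

    Hypothetical helper for illustration; rx_gain_dbi is the witness
    antenna gain with its cable loss already subtracted.
    """
    return (
        tx_power_dbm
        - tx_cable_loss_db
        + tx_antenna_gain_dbi
        - path_loss_db
        + rx_gain_dbi
    )


# Numbers from the example in this post.
expected = expected_rssi_dbm(
    tx_power_dbm=27.0,        # US915 beacon output
    tx_cable_loss_db=0.2,     # beaconer cable loss
    tx_antenna_gain_dbi=6.0,  # beaconer antenna gain
    path_loss_db=124.0,       # rounded FSPL over 40 km
    rx_gain_dbi=3.0,          # witness antenna gain incl. cable loss
)
reported = -108.0  # RSSI the witness actually reported

# How much weaker the real signal was than the clear-air prediction.
shortfall_db = expected - reported

print(f"Expected RSSI: {expected:.1f} dBm")
print(f"Reported RSSI: {reported:.1f} dBm")
print(f"Shortfall:     {shortfall_db:.1f} dB")
```

Swap in your own Hotspot’s numbers from Explorer and you can see how far your real-world witnesses land from the clear-air prediction.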

Where does this leave us? With a thornier problem than when we started, as we now realize that gaming through attenuation is significantly easier than you might have thought, since the leeway is so great. For now, at least you know how to check your RSSI values. If you want to see the equations Helium is using, check their github, starting here. Yeah, it ain’t easy to read.

The solution is for Helium to start ramping up the fspl_factor chain variable, which will clamp down on allowable RSSI and make it more of a real control. Before you start screaming about what Helium should do and how fast they should do it, keep in mind that they are managing an enormous and complex system, and gaming is (these are MY words, not theirs) a relatively small problem compared to keeping the blockchain running.

The great news is that if you’re not an RF geek, none of this matters. Assert your correct antenna gain including cable loss, and focus on what’s actually important, which is WHERE you put your hotspot. Need help with that? Take my Helium Basic Course or the HeliumVision Master Class; I built ’em to help you understand the things that actually matter in Helium.

Rock on!

p.s. Giant thanks to Jeremy Cooper for his help explaining this to me and fact checking my usual hasty assumptions. All mistakes are mine, all righteous accuracy is his. If you want to get an idea of the experience and skill being thrown at this problem, I strongly recommend you check out the interview we did on YouTube.

Additional Ultra Geeky Thoughts from @Jerm

Although most US hotspots can and do use a conducted power of 27 dBm when transmitting, a few things can make it different:

- Manufacturer uses a radio that can’t output 27 dBm.

- The blockchain has asked the radio to transmit at a lower power due to EIRP limits, but the radio can’t do that specific power, so it chooses the next lower level. Example: A 10 dBi antenna in the US would require a +26 dBm transmit. If the card can’t do +26 dBm it might do +25 dBm.

When either of these occurs, the actual transmitted power the hotspot used is reported on the blockchain. This means that the average user just relying on Explorer may not see it, but the precise data is entered (and used) by the blockchain.
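A rough Python sketch of that “next lower level” behavior, under loudly stated assumptions: a 36 dBm EIRP ceiling (implied by the 10 dBi / +26 dBm example above), a 27 dBm maximum conducted power, and a made-up list of power steps the radio supports. The function name and the step list are hypothetical; this is not the blockchain’s or any manufacturer’s actual logic.

```python
from typing import Sequence


def conducted_power_dbm(
    antenna_gain_dbi: float,
    supported_levels_dbm: Sequence[float],
    max_conducted_dbm: float = 27.0,  # assumed US915 conducted power
    eirp_limit_dbm: float = 36.0,     # assumed EIRP ceiling (27 dBm + 9 dBi)
) -> float:
    """Pick the highest supported power that keeps EIRP under the limit.

    antenna_gain_dbi is the asserted gain, cable loss included.
    """
    # Power the radio is asked for: capped by both limits.
    requested = min(max_conducted_dbm, eirp_limit_dbm - antenna_gain_dbi)
    # The radio can only use levels it supports, so it steps down.
    usable = [level for level in supported_levels_dbm if level <= requested]
    if not usable:
        raise ValueError("no supported power level is low enough")
    return max(usable)


# Hypothetical radio that cannot do +26 dBm.
levels = [20.0, 23.0, 25.0, 27.0]
print(conducted_power_dbm(10.0, levels))  # 10 dBi antenna: asked for +26, uses +25
print(conducted_power_dbm(3.0, levels))   # 3 dBi antenna: full +27
```

The number this picks is the one that ends up on chain, which is why the conducted power for a given beacon can differ from the 27 dBm you’d assume.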
